Wow, it’s been a while[1] since I posted one of these. We haven’t been resting on our laurels though – a bunch of work has been going on, and I want to highlight some of the big pieces that I’ve seen go by.

But first…

This Performance Update is brought to you by: getBoundsWithoutFlushing

For privileged JavaScript running in the browser, you have access to an interface called nsIDOMWindowUtils. These days, instead of doing a bunch of XPCOM gymnastics to get to that interface, you can access it via window.windowUtils. windowUtils exposes a handy function called getBoundsWithoutFlushing, and it delivers exactly what it says on the tin: you can pass it an element, and it’ll give you the most recently calculated bounds for the element without causing a style or layout flush.

That’s great! However, use with caution – because we’re getting information without flushing, the bounds information might be stale. For example, if you have an element that’s 50×50 pixels, and then apply some style in JavaScript that makes the element 500 pixels wide, calling getBoundsWithoutFlushing immediately after setting the style would still return the 50×50 pixel box. The information will only be brought up to date after the next flush, which will come either from the refresh driver (good!) or from some other code causing a layout flush (maybe bad!).
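
To make the staleness concrete, here is a toy model in plain JavaScript. It is not Gecko code: `ToyElement` and `ToyLayout` are hypothetical names invented for this sketch, and the real flush machinery is far more involved. It only illustrates that a non-flushing read returns whatever layout last computed.

```javascript
// Toy model only: ToyElement and ToyLayout are hypothetical, not Gecko APIs.
class ToyElement {
  constructor(width, height) {
    this.style = { width, height };        // what script has asked for
    this.cachedBounds = { width, height }; // what layout last computed
    this.dirty = false;
  }

  setWidth(width) {
    this.style.width = width;
    this.dirty = true; // layout is now stale; nothing is recomputed yet
  }
}

class ToyLayout {
  constructor() {
    this.flushCount = 0;
  }

  // Bring the cached bounds up to date, counting how often real work happens.
  flush(element) {
    if (element.dirty) {
      element.cachedBounds = { ...element.style };
      element.dirty = false;
      this.flushCount++;
    }
  }

  // Stands in for a flushing read: always up to date, possibly expensive.
  getBounds(element) {
    this.flush(element);
    return { ...element.cachedBounds };
  }

  // Stands in for getBoundsWithoutFlushing: cheap, possibly stale.
  getBoundsWithoutFlushing(element) {
    return { ...element.cachedBounds };
  }
}

const layout = new ToyLayout();
const box = new ToyElement(50, 50);
box.setWidth(500);

const stale = layout.getBoundsWithoutFlushing(box); // still the 50×50 box
const fresh = layout.getBounds(box);                // flushes, then 500 wide
```

In privileged browser code, the real call is `window.windowUtils.getBoundsWithoutFlushing(element)`, as described above.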

If you want a refresher on style and layout flushes, I highly recommend reading this document that the front-end team put together.

And now, without further ado, here’s what the Firefox Front-end Performance team’s been up to lately!

ClientStorage (Completed by Doug Thayer)

This is a big one if you’re on macOS. Doug’s work here allows us to communicate more efficiently with the GPU on Mac hardware, which should result in smoother animations, and hopefully less CPU (and power!) bandwidth being hogged with memory-copying operations.

This was so effective that it closed out the remaining performance bug on macOS that was preventing tab warming from landing! This means that tab warming should be shipping to our release channel on macOS in Firefox 63!

Experiments with the Background Process Priority Manager (In-Progress by Doug Thayer)

This project attempts to take advantage of our multi-process architecture by reducing the priority of processes that have no tabs being displayed in the foreground to the user[2]. This is the first time, at least to my knowledge, that we’ve attempted to fiddle with process priority on Firefox Desktop[3], which means there are a bunch of unknowns for us to sort through.

Doug and I have been testing lowered background tab process priority for a few weeks, and have already identified one bug, which has recently been fixed. Once that fix is available in Nightly builds, we’ll keep tinkering with it to see if any other bugs surface, and then we’ll consider testing it out on our Nightly audience.

If you want to experiment with it right now, you can go to about:config, and create a new bool pref called dom.ipc.processPriorityManager.enabled, and set it to true. Please be warned, this is still very much in the early stages, so you might see some odd behaviour.
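
If you would rather not click through about:config, the same pref can be set from a `user.js` file in your profile directory. This is a sketch that assumes you are using a throwaway or testing profile; the pref name is the one given above.

```javascript
// user.js, placed in the Firefox profile directory and read at start-up.
user_pref("dom.ipc.processPriorityManager.enabled", true);
```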

Migrate consumers to the new Places Observer system (In-Progress by Doug Thayer)

Doug overhauled the Places Observer Notification APIs a month or so back, allowing consumers to take advantage of batches of notifications[4]. Doug is now in the process of converting a number of callsites within our Bookmarking code to take advantage of this. Once he puts out a few test failures and lands these patches, operations on large numbers of bookmarks should be handled more efficiently.
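
The difference between per-change and batched notifications can be sketched with a toy notifier. Everything here is hypothetical (`ToyNotifier`, the `bookmark-added` event shape); it is not the Places API, just an illustration of why batching means fewer listener calls.

```javascript
// Toy model only: ToyNotifier is hypothetical, not the Places Observer API.
class ToyNotifier {
  constructor() {
    this.listeners = [];
    this.pending = [];
  }

  addListener(listener) {
    this.listeners.push(listener);
  }

  // Queue an event instead of notifying immediately.
  enqueue(event) {
    this.pending.push(event);
  }

  // Deliver every queued event to each listener in a single call.
  flush() {
    if (this.pending.length === 0) {
      return;
    }
    const batch = this.pending;
    this.pending = [];
    for (const listener of this.listeners) {
      listener(batch);
    }
  }
}

const notifier = new ToyNotifier();
let listenerCalls = 0;
let bookmarksSeen = 0;

notifier.addListener(events => {
  listenerCalls++;
  bookmarksSeen += events.length;
});

// Simulate adding 1000 bookmarks in one operation.
for (let i = 0; i < 1000; i++) {
  notifier.enqueue({ type: "bookmark-added", id: i });
}
notifier.flush();
```

With one-at-a-time delivery, the listener above would have run 1000 times; here it runs once and can do its update in a single pass.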

Document Splitting (In-Progress by Doug Thayer)

With our Graphics team getting closer and closer to making WebRender a reality, we’ve been looking at ways we can make our front-end code work more efficiently with it.

Disclaimer: I’m not 100% up-to-speed on the various nuances to this project, so I might get a few things wrong below. If someone from the Graphics team reads this and has some corrections or clarifications, please send them my way.

Document splitting will allow Gecko and WebRender to draw updates to the browser UI independently from web content. Historically, we’ve done something like this with layers and layer invalidation, but with WebRender, we have one giant display list that gets shipped over to the GPU thread to render for the whole window.

With document splitting, we’ll have independent structures for (at least for starters) the UI and web content. We suspect this will allow us to render more efficiently – especially when there’s a lot going on in web content (or there’s a lot going on in the UI!).

Make the RemotePageManager lazy (Completed by Felipe Gomes)

Felipe made it so that the RemotePageManager module isn’t loaded until necessary, and that saved us a handy 3.5% on content process start-up time, and 1% on base content memory used by JavaScript!
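
The general pattern is a lazy getter: the expensive load is deferred until the first access and then cached. The sketch below is plain JavaScript with a hypothetical `defineLazy` helper and a stand-in loader; it is not how Felipe’s patch is written, only the idea behind it.

```javascript
// Hypothetical helper: define `name` on `target` so that `load` runs only on
// first access, after which the result is cached as a plain property.
function defineLazy(target, name, load) {
  Object.defineProperty(target, name, {
    configurable: true,
    enumerable: true,
    get() {
      const value = load();
      // Replace the getter with the loaded value so later reads are cheap.
      Object.defineProperty(target, name, {
        value,
        configurable: true,
        enumerable: true,
        writable: true,
      });
      return value;
    },
  });
}

let loadCount = 0;
const modules = {};

// Stand-in for an expensive module import.
defineLazy(modules, "pageManager", () => {
  loadCount++;
  return { sendMessage: msg => `sent: ${msg}` };
});

const loadsBeforeUse = loadCount; // nothing has been loaded yet
const reply = modules.pageManager.sendMessage("hi"); // first access loads
modules.pageManager.sendMessage("again"); // reuses the cached module
```

Code paths that never touch `modules.pageManager` never pay for the load, which is where start-up and memory savings of this kind come from.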

Smoother Tab Animations (In-Progress by Felipe Gomes)

The Photon UI shipped in Firefox 57 to great fanfare, and all of us front-end folk were pretty psyched about it. Unfortunately, as is always the case, there was some work that had to be cut for time.

Felipe is picking up some work that we cut that re-works how we do tab animations[5]. Our current animations involve growing and shrinking tabs, and for each frame of that animation, we calculate the change in style and layout and paint the change on the main thread.

The new animations were designed from the ground up to take advantage of compositor-accelerated CSS[6].
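
As a rough illustration of the difference, the sketch below compares two sets of keyframes for a hypothetical tab-opening animation. It assumes the commonly cited rule that `transform` and `opacity` animations can run on the compositor, while animating `width` needs style and layout work on the main thread every frame. The keyframes and the helper are invented for this example and are not the actual new tab animations.

```javascript
// Properties that browsers can commonly animate on the compositor.
// Assumption for this sketch; the real set depends on the engine.
const COMPOSITOR_FRIENDLY = new Set(["transform", "opacity"]);

// Returns the animated properties that would force main-thread work.
function mainThreadProperties(keyframes) {
  const animated = new Set();
  for (const frame of keyframes) {
    for (const property of Object.keys(frame)) {
      animated.add(property);
    }
  }
  return [...animated].filter(p => !COMPOSITOR_FRIENDLY.has(p));
}

// Growing a tab by animating its width: layout changes on every frame.
const growByWidth = [{ width: "0px" }, { width: "225px" }];

// Hypothetical alternative: slide and fade the tab in instead.
const slideAndFade = [
  { transform: "translateX(-225px)", opacity: 0 },
  { transform: "translateX(0)", opacity: 1 },
];

const widthCost = mainThreadProperties(growByWidth);  // ["width"]
const slideCost = mainThreadProperties(slideAndFade); // []
```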

Felipe has some early try builds that he’s posted with the new animations, and we’re pretty excited to see where it goes. Or, if you don’t want to try a build, you can check out this video. Or this video (it’s the previous video in slow motion). Or this video for a variation!

Overhauling about:performance (In-Progress by Florian Quèze)

Florian and Tarek Ziade have proven out the platform work to support the new about:performance, and are now trying to bang out the final bits to make the new about:performance something we actually want to ship. They’re working with our UX team to figure out exactly what that looks like, but we’re hoping ultimately to give the user the most informed picture possible on what is eating up their CPU cycles.

You can try the new about:performance today in Nightly by setting dom.performance.enable_scheduler_timing to true, then restarting the browser, and then visiting about:performance.

Browser Adjustment Project (In-Progress by Gijs Kruitbosch)

Informed by the Firefox Hardware Report, Gijs has been fitted out with some new hardware that we think helps to encapsulate what we consider to be “average” consumer hardware, and “weak” consumer hardware. Gijs has been focusing on prior art by other browser engines, as well as operating systems to see how best we can stand on the shoulders of friends and not re-invent the wheel.

Again, this is still an early-days research project, so no code’s been written yet, but we hope to have a clearer picture on how best to proceed soon.

Avoiding spurious about:blank loads in the parent process (In-Progress by Gijs Kruitbosch)

This work should allow us to avoid some unnecessary work when we create new windows and tabs. This has involved changing a very large number of tests, and doing a bunch of plumbing to get Firefox ready for this change. The dependency tree on the bug gives you a bit of the picture.

Thankfully, I think we’re approaching the home stretch on this one. Hopefully, this should buy us some precious milliseconds when starting up and opening new windows.

Enable the separate Activity Stream content process by default (In-Progress by Mike Conley and Jay Lim)

Enabling the separate Activity Stream content process will allow users to take advantage of the script caching work that my intern Jay Lim did a few months back, which should let us render about:newtab more quickly.

Unfortunately, turning this separate content process on by default has been plagued with problems – most recently, a shutdown leak when running our automated tests. Thankfully, we’ve recently made a breakthrough on the leak, and we’re working on eliminating the cause.

Cheaper tabs in titlebar (In-Progress by Mike Conley)

We run a bit of JavaScript when rendering the browser UI to figure out how exactly to lay out the tabs in the titlebar.

Unfortunately, this JavaScript involves synchronous style and layout flushes, and ultimately we’re doing calculations that are best left solely to the layout engine.

I’m working on swapping out the JavaScript for raw CSS. Running the benchmarks locally, this saved anywhere from 16-20ms on the window opening Talos benchmark. That might not sound like a lot, but from a performance engineer’s perspective, that’s a pretty solid gain.

Grab bag of Notable Performance Work

And without further ado, here’s a bunch of miscellaneous work that’s gone into the browser recently that has helped make it faster and better! Kudos to all the folk who landed these things! A bunch of these fixes are going out in Firefox 63 and Firefox 64.

- Masayuki Nakano made scrolling in the Library and in any of our panels with a touchpad on Windows much smoother

- Ehsan Akhgari made it so that accessing the currentURI property on <browser>’s (which happens pretty often) is much cheaper

- Dan Glastonbury improved our performance on pages that set innerHTML and display:none on nodes one after the other

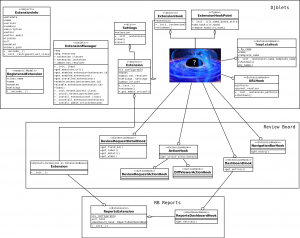

- Kris Maglione enabled out-of-process WebExtensions for Linux! This means that all desktop platforms will run WebExtensions out of process, which is great for responsiveness! Kris also landed a huge series of patches to move a bunch of our framescript code to a new “actor” model which is much easier for us to load lazily. Along with the content process start-up time and memory savings, this improved time to paint new windows by about 10%!

- Gijs Kruitbosch improved our performance on Windows when restoring very large sessions, and also got rid of a bunch of unnecessary code we were loading during start-up

- Tom Schuster improved the performance of our structured cloning code

- Mike Conley moved the metadata-writing that occurs on macOS after a file download off of the main thread

- Henri Sivonen used some fancy Rust code and made it so that most strings are converted much more quickly between UTF-8 and UTF-16. Time reductions are often around 30%, sometimes better than 50% and in the best case 90%!

- Brian Grinstead made it so that the “dropmarker” element in browser windows never needs to be checked for an XBL binding, which saves us some time on window opening

- Olli Pettay adjusted some event ordering to improve animation performance when transitioning to fullscreen

- Miko Mynttinen enabled Retained DisplayLists for the browser UI!

- Dan Holbert improved our reflow performance for flex items

- Felipe Gomes made it so that we don’t load Enterprise Policies until we absolutely need them

- Johann Hofmann got rid of a synchronous style flush we were doing when cancelling the Tracking Protection animation

- Doug Thayer made it so that we do less malloc-ing and hash-table look-ups when using CSS and SVG filters

[1] Like… 2 months. Oof. The blog guilt is overwhelming!

[2] Across all windows, if you were worried about that.

[3] It looks like we did something like this for Firefox OS though, since I believe the infrastructure we’re using to do this comes from that project.

[4] Up until now, changes were handled one at a time.

[5] Check out the “new tab motions” attachment in this bug for some videos by our talented designer epang!

[6] Here’s a great post from some of our friends at Google about this sort of work.