In my last post, I talked about an extension for Review Board that would allow users to register “defects”, “TODOs” or “problems” with code that’s up for review.

After chatting with the lead RB devs for a bit, we’ve decided to scrap the extension.

[audible gasp, booing, hissing]

Instead, we’re just going to put it in the core of Review Board.

[thundering applause]

Defects

Why is this useful? I’ve got a few reasons for you:

- It’ll be easier for reviewees to keep track of things left to fix, and similarly, it’ll be harder for reviewees to accidentally skip over fixing a defect that a reviewer has found

- My statistics extension will be able to calculate useful things like defect detection rate, and defect density

- Maybe it’s just me, but checking things off as “fixed” or “completed” is really satisfying

- Who knows, down the line, I might code up an extension that lets you turn finding/closing defects into a game
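To make the statistics bullet a bit more concrete, here is a minimal sketch of how those two numbers are often defined: defect density as defects per thousand lines reviewed, and defect detection rate as defects found per hour of reviewing. The function names and exact definitions are assumptions for illustration, not what the statistics extension necessarily computes.

```python
def defect_density(defects_found: int, lines_reviewed: int) -> float:
    """Defects per 1000 lines of code reviewed (assumed definition)."""
    if lines_reviewed <= 0:
        raise ValueError("lines_reviewed must be positive")
    return defects_found * 1000 / lines_reviewed


def defect_detection_rate(defects_found: int, review_seconds: float) -> float:
    """Defects found per hour spent reviewing (assumed definition)."""
    if review_seconds <= 0:
        raise ValueError("review_seconds must be positive")
    return defects_found * 3600 / review_seconds


if __name__ == "__main__":
    # Hypothetical review: 3 defects in a 600-line patch, 30 minutes of reviewing.
    print(defect_density(3, 600))
    print(defect_detection_rate(3, 30 * 60))
```

Both numbers only need a count of filed defects per review, which is exactly what a built-in "this comment files a defect" flag would provide.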

However, since we’re adding this to the core of Review Board, we have to keep it simple. One of Review Board’s biggest strengths is its total lack of clutter. No bells. No whistles. Just the things you need to get the job done. Let the extensions bring the bells and whistles.

So that means creating a bare-bones defect-tracking mechanism and UI, and leaving it open for extension. Because who knows, maybe there are some people out there who want to customize what kind of defects they’re filing.

I’ve come up with a design that I think is pretty simple and clean. And it doesn’t rock the boat – if you’re not interested in filing defects, your Review Board experience stays the same.

Filing a Defect

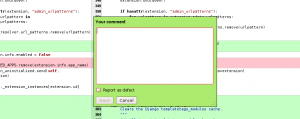

I propose adding a simple checkbox to the comment dialog to indicate that this comment files a defect, like so:

While I’m in there, I’ll try to toss in some hooks so that extension developers can add more fields – for example, the classification or the priority of the defect. By default, however, it’s just a bare-bones little checkbox.
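As a rough illustration of what "hooks for more fields" could mean, here is a small sketch of a registry that lets extensions attach extra data to a defect-filing comment. Every name in it (`Comment`, `register_defect_field_hook`, `build_comment`, the `files_defect` form key) is hypothetical; this is not Review Board's extension API, just one way the idea could be shaped.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Comment:
    text: str
    files_defect: bool = False  # the bare-bones checkbox
    extra: Dict[str, str] = field(default_factory=dict)  # filled in by extensions


# A hook receives the submitted form data and returns extra fields to store.
FieldHook = Callable[[Dict[str, str]], Dict[str, str]]
_defect_field_hooks: List[FieldHook] = []


def register_defect_field_hook(hook: FieldHook) -> None:
    """Let an extension contribute fields to defect-filing comments."""
    _defect_field_hooks.append(hook)


def build_comment(form: Dict[str, str]) -> Comment:
    """Build a comment from form data; hooks run only when a defect is filed."""
    comment = Comment(
        text=form.get("text", ""),
        files_defect=form.get("files_defect") == "on",
    )
    if comment.files_defect:
        for hook in _defect_field_hooks:
            comment.extra.update(hook(form))
    return comment


# A hypothetical extension that adds a priority field.
def priority_hook(form: Dict[str, str]) -> Dict[str, str]:
    return {"priority": form.get("priority", "normal")}


register_defect_field_hook(priority_hook)
```

With no hooks registered, a comment carries nothing but the checkbox value, which keeps the default experience as bare-bones as described.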

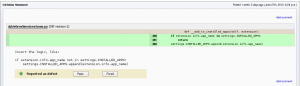

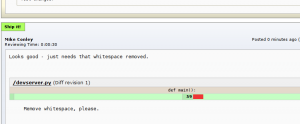

So far, so good. You’ve filed a defect. Maybe this is what it’ll look like in the in-line comment viewer:

Two Choices

A reviewer can file defect reports, and the reviewee can act on them.

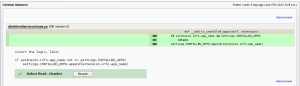

Let’s say I’m the reviewee. I’ve just gotten a review, and I’ve got my editor / IDE with my patch waiting in the background. I see a few defect reports have been filed. For the ones I completely agree with, I fix them in my editor, and then go back to Review Board and mark them as Fixed.

It’s also possible that I might not agree with one or more of the defect reports. In this case, I’ll reply to the comment to argue my case. I might also mark the defect report as Pass, which means, “I’ve seen it, but I think I’ll pass on that”.
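The two choices boil down to a tiny state model: a defect report starts out open, and the reviewee resolves it as either Fixed or Pass. Here is a minimal sketch of that, with hypothetical class and method names. The rule that only an open report can be resolved is my own assumption for the sketch, not something the design above settles.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class DefectStatus(Enum):
    OPEN = "open"      # filed by the reviewer, not yet acted on
    FIXED = "fixed"    # reviewee agreed and fixed it
    PASSED = "passed"  # reviewee has seen it and chose to pass


@dataclass
class DefectReport:
    comment_text: str
    status: DefectStatus = DefectStatus.OPEN

    def mark_fixed(self) -> None:
        self._resolve(DefectStatus.FIXED)

    def mark_pass(self) -> None:
        self._resolve(DefectStatus.PASSED)

    def _resolve(self, new_status: DefectStatus) -> None:
        # Assumption: a report can only be resolved once, from OPEN.
        if self.status is not DefectStatus.OPEN:
            raise ValueError(f"defect is already {self.status.value}")
        self.status = new_status


def open_defects(reports: List[DefectReport]) -> List[DefectReport]:
    """The reviewee's remaining to-do list."""
    return [r for r in reports if r.status is DefectStatus.OPEN]
```

Keeping Pass as its own status, rather than just leaving the report open, is what lets the reviewee's to-do list actually reach zero even when they disagree with a reviewer.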

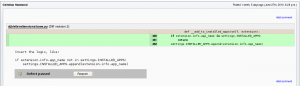

These comments and defect reports are also visible in the review request details page:

Thoughts?

What do you think? Am I on the right track? Am I missing a case? Does “pass” make sense? Will this be useful? I’d love to hear your thoughts.